The rise in social media platforms and usage has given marketers a compelling new avenue for conducting qualitative research. In this article, we explore learnings and best practices gleaned from pilots leveraging Google+ for consumer insights research. These include findings around recruiting and incentive strategies, a bevy of user engagement tactics, different audiences and research questions, and the benefits and current challenges of using a social platform for this type of research.

The rise in social media platforms and usage has given marketers a compelling new avenue for conducting qualitative research. By design, social media platforms are built to facilitate sharing and conversations. In addition, the ubiquity of social media means the learning curve for participants is, in some ways, lower than when using a platform designed specifically for qualitative research. Activities such as posting, commenting, tagging, and sharing photos and videos are second nature to participants who use social media to communicate.

The Pilots

To test the viability of using social media to conduct structured, qualitative consumer research, we partnered with two research companies to run four pilot studies using Google+ in October 2012. We approached the pilots as actual studies around specific audiences, with the intention of answering specific types of questions. In other words, we wanted to do our best to meet real qualitative research objectives using Google+. Doing so allowed us to generate learnings around categories, audiences, and questions; study length and participant recruitment; participation incentives; and Google+ engagement activities.

Categories, Audiences, and Questions

To test whether an environment such as Google+ was better suited to some types of qualitative research than others, we selected two different verticals, aligned to two types of research questions, for the pilots.

For the categories and audiences, we selected the following:

- Wireless Carriers as a relatively high-consideration purchase category with longer consideration periods. For this category, recent smartphone owners and wireless plan decision makers within families were chosen for the pilots.

- Consumer Packaged Goods as a lower-consideration or more routine purchase category. For this category, new dads and "holiday makers" (those responsible for planning and cooking for the year-end holidays) were chosen for the pilots.

The two types of questions we sought to answer for those categories were:

- Distinct, predetermined questions about a consumer's media consumption habits throughout the pre-purchase stage of the purchase cycle, as well as how a consumer uses a product post-purchase.

- Exploratory insights into a target audience, such as the values important to new dads. These questions would likely be less focused and have greater freedom to evolve over the course of the study, building on what participants reveal.

We were able to synthesize activities that effectively addressed both types of questions. Some of the activities that were tested are detailed further on in this paper.

Study Length and Participant Recruitment

Pilot studies were divided between two five-week-long studies and two one-week-long studies. Both sets of studies included daily engagement and discussions led by a moderator. On top of that, there were seven major events, such as Hangouts, video journals, and shop-a-longs, in the month-long studies, and five such events (one per weekday) in the week-long studies.

For recruiting, we wanted to test the feasibility of conducting research on specific, defined audiences instead of simply mining existing fans or public conversations for insight. As a result, participants were recruited from two different panels via screeners.

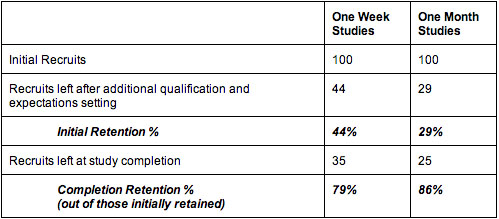

Out of the initial 100 participants recruited at the outset of each study, approximately 25% remained through the end of the month-long studies and 35% remained through the end of the week-long studies. In both cases, the primary point of drop-off was between the initial screening and the point at which recruits were re-qualified and given expectations and instructions. However, for the month-long groups, a greater percentage of the remaining participants stayed until the end of the study. In addition, participants in the month-long studies were asked to sign a release form, which may have contributed to the higher initial drop-off but also to a potentially more committed group.

For all studies, participants were recruited from panels consisting of people more accustomed to taking surveys. Recruiting from focus-group-oriented panels in the future may yield higher retention rates.
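The retention arithmetic above can be made concrete with a short sketch. Only the 100 recruits per study and the approximate 25% and 35% completion rates come from the pilots. The head counts after re-qualification are not reported in this article, so the values used for them below are hypothetical placeholders, chosen only to illustrate how a study can lose more people early yet keep a larger share of those who remain.

```python
def retention_rate(started: int, finished: int) -> float:
    """Return the share of `started` participants who also finished."""
    if started <= 0:
        raise ValueError("started must be a positive head count")
    return finished / started


# From the text: 100 recruits per study; about 25% (month-long) and
# about 35% (week-long) were still participating at the end.
RECRUITED = 100
FINISHED = {"month-long": 25, "week-long": 35}

# HYPOTHETICAL placeholders: the number of recruits left after
# re-qualification is not given in the text.
REQUALIFIED = {"month-long": 30, "week-long": 50}

for study, finished in FINISHED.items():
    overall = retention_rate(RECRUITED, finished)
    after_requal = retention_rate(REQUALIFIED[study], finished)
    print(f"{study}: overall {overall:.0%}, "
          f"after re-qualification {after_requal:.0%}")
```

Separating the overall rate from the post-re-qualification rate is one way to tell early drop-off apart from attrition during the study itself.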

Participation Incentives

Loss aversion and more traditional incentive strategies were both tested in the pilot studies to see if they produced different levels of participation. In all studies, credits or points were used that could be redeemed for cash at the end of the studies. In the new dads study, credits were awarded up front, but could be taken away if participants did not engage in scheduled events. In the other studies, participants started with zero points and were rewarded as they completed participation milestones. For both the new dads and recent smartphone owners studies, participants also had the chance to earn bonus points at the end if they demonstrated exemplary participation. In the end, we did not see a difference in retention rates using the two strategies. However, it is possible that one method could work more effectively with some audiences than others. Also, finding a way to keep current reward balances more "front and center" with participants may produce differences between the two strategies.
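The two incentive structures described above can be sketched as simple balance calculations. This is a minimal illustration, not the scoring system used in the pilots: the function names, the point values, and the attendance pattern are all hypothetical, and the end-of-study bonus is left out for brevity.

```python
def loss_aversion_balance(attended: list[bool], upfront: int, penalty: int) -> int:
    """Start with `upfront` credits and deduct `penalty` per missed event."""
    missed = sum(1 for was_present in attended if not was_present)
    return max(upfront - missed * penalty, 0)


def earn_as_you_go_balance(attended: list[bool], reward: int) -> int:
    """Start at zero and add `reward` for each event attended."""
    return sum(reward for was_present in attended if was_present)


# HYPOTHETICAL value: the pilots' actual point amounts are not given.
POINTS_PER_EVENT = 10

# Seven scheduled events, as in the month-long studies; the attendance
# pattern itself is made up for illustration.
attendance = [True, True, False, True, False, True, True]

loss_framed = loss_aversion_balance(
    attendance,
    upfront=POINTS_PER_EVENT * len(attendance),
    penalty=POINTS_PER_EVENT,
)
gain_framed = earn_as_you_go_balance(attendance, reward=POINTS_PER_EVENT)
print(loss_framed, gain_framed)
```

When the up-front grant equals the per-event amount multiplied by the number of events, both schemes pay out the same total for the same attendance, so any difference in participation would come from how the reward is framed rather than from the amount at stake.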

Outside of monetary incentives, we also experimented with other tactics to encourage engagement and participation. These included pre-planning and communicating upcoming events in the month-long studies so that participants could plan when to participate. For the longer studies, we used Google+ Circles to create "super user" groups of new dads and recent smartphone owners who were still actively participating halfway through the studies. Doing so also appeared to function as an intangible motivator, as the invited participants reported feeling "special" and "happy" about being part of this exclusive group.

Google+ Engagement Activities

A large part of our pilots was dedicated to testing various methods of engagement by creatively leveraging the different features available on Google+. These features include:

- Hangouts (group video chat that includes apps functionality)

- Events (a way to organize group activities)

- Photo and video uploading

- Location tagging

- +1-ing posts and comments to vote up favorites

- Mobile capabilities via the Google+ mobile app

- Text posting and commenting

- Asymmetrical sharing capabilities allowing for both group sharing and one-to-one sharing with a moderator

Our testing included finding logical ways to group participants in an environment designed for asymmetrical sharing - we found self-contained Events to be the simplest way of doing so. While Circles provided more flexibility, they also added some complexity. Communities, which were not yet available at the time of the pilots, now offer a great way to organize participants into a self-contained study group along with all Events, posts, and discussions related to the study.

Engagement activities worked best structured as individual Google+ Events that participants were invited to. Events are self-contained "pages" on Google+ that correspond to a given activity, where discussions, photos and videos pertaining to the event could be aggregated in one place. Activities tested include:

- Live Hangouts with participants. Some Hangouts were conducted in a similar fashion to typical focus group discussions, while others involved specific activities like watching and evaluating ads on YouTube together or doing a group brainstorm using a third-party Hangout app.

- Video diaries. Participants took video with their mobile devices and narrated along. These worked really well within an event as all of the uploaded videos were presented as a collage with a discussion panel on the side.

- Photo shop-a-longs. Participants were asked to use their mobile phones to take pictures of products, signs, and other imagery that resonated with them in stores and to talk about the pictures in comments.

- Behavior logs using Google Docs. Recent smartphone owners were asked to log their wireless data usage over the course of a day via a pre-designed Google Docs form linked within an event.

- Posted screenshots and live screen sharing in Hangouts. Participants captured screenshots to walk us through their online research process. Live screen sharing was also used during a Hangout to show a document on the moderator's screen, and it has the potential to be used for live walkthroughs with a Hangout group.

Conclusion

Google+'s design as a social media platform and not specifically a qualitative research platform presented both opportunities and challenges.

In terms of opportunities, the myriad features designed for sharing and user interaction provide a rich palette from which to design creative and engaging participant activities. Participants reported liking the Hangout experiences and the engagement with other participants. Some reported that the group sharing environment helped them learn more about the topics at hand and, as a result, they found the experiences interesting - in contrast with more traditional methods that rely mostly on one-directional sharing. Also, actions like sharing and posting "make sense" to participants already used to social media in their daily lives. This was particularly true for more digitally savvy participants.

Challenges compared to more traditional qualitative research platforms included less control over what participants are exposed to and what they can and cannot do. For example, it is possible for participants to start Hangouts with each other without a moderator - which may or may not be a downside. We also ran the risk of participants accidentally sharing outside of the research group, but clear instructions helped to mitigate that. Having participants post directly within Events - and now Communities - also minimizes that risk. Lastly, Google+ does not have a back end specifically designed for pulling and aggregating participant interactions for post-study analysis.

Overall, we found Google+ to be a capable and readily accessible platform for qualitative research and feel that the social features open the door to exciting user engagement possibilities. Researchers using Google+ for qualitative research need to approach studies with an open mind and out-of-the-box thinking. There are fewer user controls but a lot of flexibility for interacting with participants. Also, fully taking advantage of the capabilities available in Google+ means participants will be able to see each other's posts, which may or may not color the information they choose to share. However, we found participants surprisingly open and candid with what they shared, and found that the mutual sharing can lead to deep conversations and rich insights.

This article was originally presented at ARF Re:think 2013.